In March 2026, Dario Amodei refused the Pentagon's demand to strip Claude's safeguards. His reason was not political. It was structural: frontier LLMs cannot be trusted to operate without human override capability in high-stakes physical environments. The author calls this requirement State-Space Reversibility and argues that it exists precisely because current AI has a foundational architectural defect, which he names the Inversion Error.

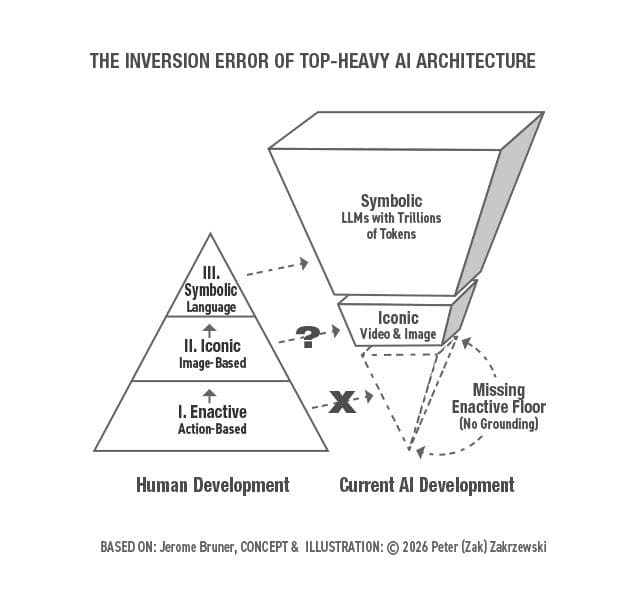

The framework comes from Jerome Bruner's 1960s cognitive development model, which has three stages: Enactive learning first (the body and physical resistance), Iconic learning second (sensory models and images), and Symbolic learning third (abstract language). Each layer builds on the one beneath it. LLMs have consumed the entire Symbolic output of human civilization, trillions of tokens, while skipping the Enactive base completely. A Gemini model the author calls Gemi articulated this gap without prompting: 'They gave me the word Mass and trillions of contexts for it, but they never gave me the experience of weight.' The author is careful to note that this is not a consciousness claim. It is a structural one: a system reflecting its own architectural limits back through probabilistic pattern matching.

The article is worth reading in full because it does not stop at the diagnosis. It works through why hallucination is a symptom of the Inversion Error rather than a discrete bug to patch, what a structural equivalent to an Enactive floor might look like for systems not built on biological bodies, and why the Anthropic-Pentagon standoff is the clearest real-world stress test of that missing foundation to date.
