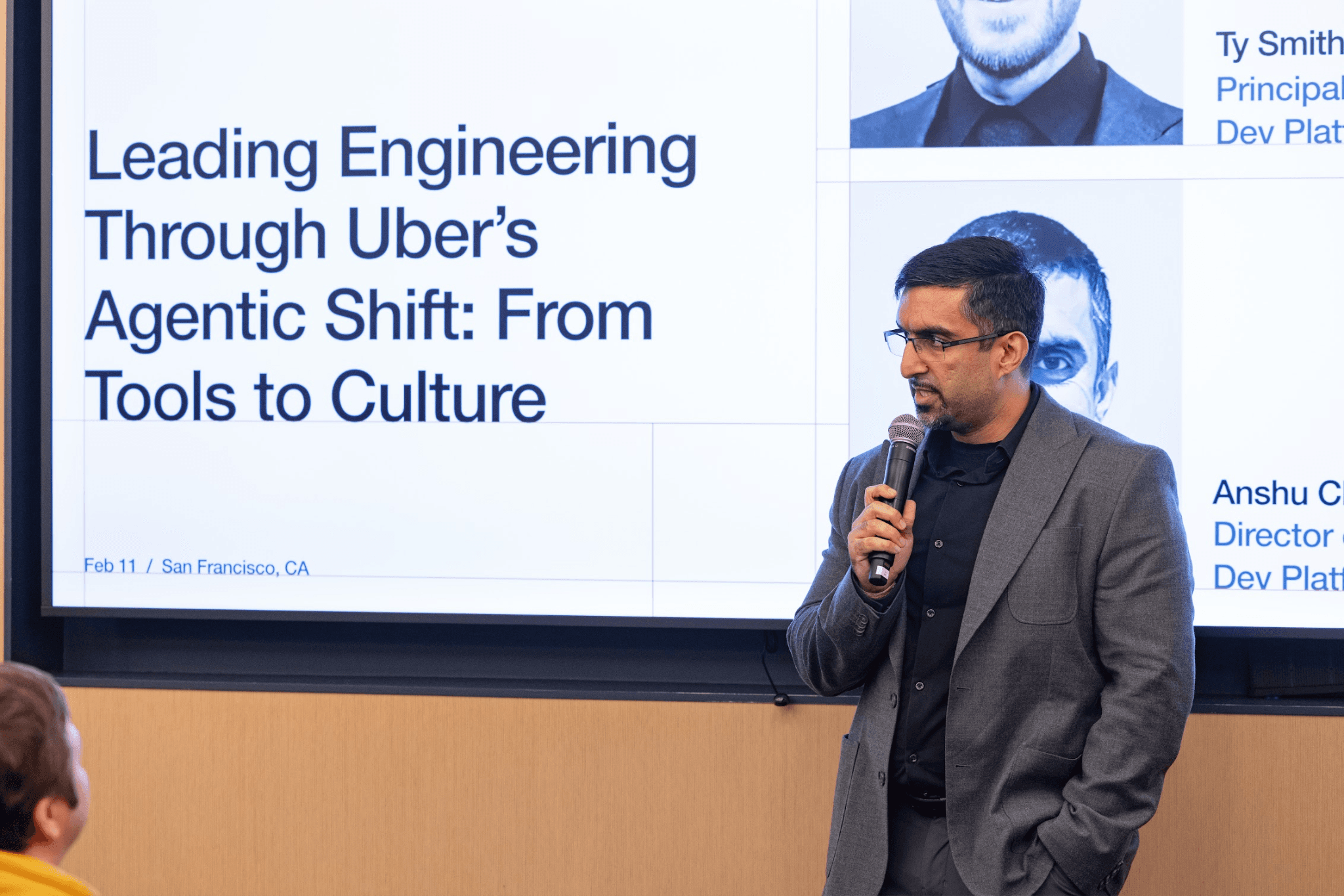

Uber's AI costs are up 6x since 2024. That number, disclosed at the Pragmatic Summit in San Francisco by principal engineer Ty Smith and director of engineering Anshu Chadha, is the honest counterweight to the headline metrics: 92% of Uber's nearly 3,000 engineers use AI agents monthly, 31% of code is AI-authored, and 11% of pull requests are opened by agents. The cost spike follows directly from engineers doing what comes naturally once agents are available: running many of them in parallel instead of working through one task at a time.

To manage the sprawl, Uber built its own internal AI stack. It spans four layers: an internal platform, context sources, off-the-shelf industry tools, and specialized agents. The pieces Uber built in-house include an MCP Gateway, an Agent Builder, the AIFX CLI, and Minion, a background agent platform with monorepo access and optimized defaults. The surge in AI-generated code created a review bottleneck, so Uber also shipped Code Inbox for smart PR routing, uReview for high-signal automated review comments, Autocover, which generates more than 5,000 unit tests per month, and Shepherd for end-to-end migration management.

The full session is worth reading for two reasons. First, Smith and Chadha are candid about what did not work: top-down mandates drove adoption more slowly than engineers sharing their wins with one another. Second, the architecture decisions behind Minion and the MCP Gateway show how a large monorepo shop actually operationalizes agentic workflows at scale, a problem most engineering orgs are about to face and have not yet solved.
