Developer tool consolidation is over. Eighteen months ago, GitHub Copilot and ChatGPT dominated every stack. Today, a survey of 10 companies, with teams ranging from 5 to 1,500 engineers, shows a fractured landscape: Cursor, Claude Code, Codex, Gemini CLI, CodeRabbit, Graphite, and Greptile all have real adoption, and the Copilot-to-Cursor-to-Claude Code migration path is now well-worn.

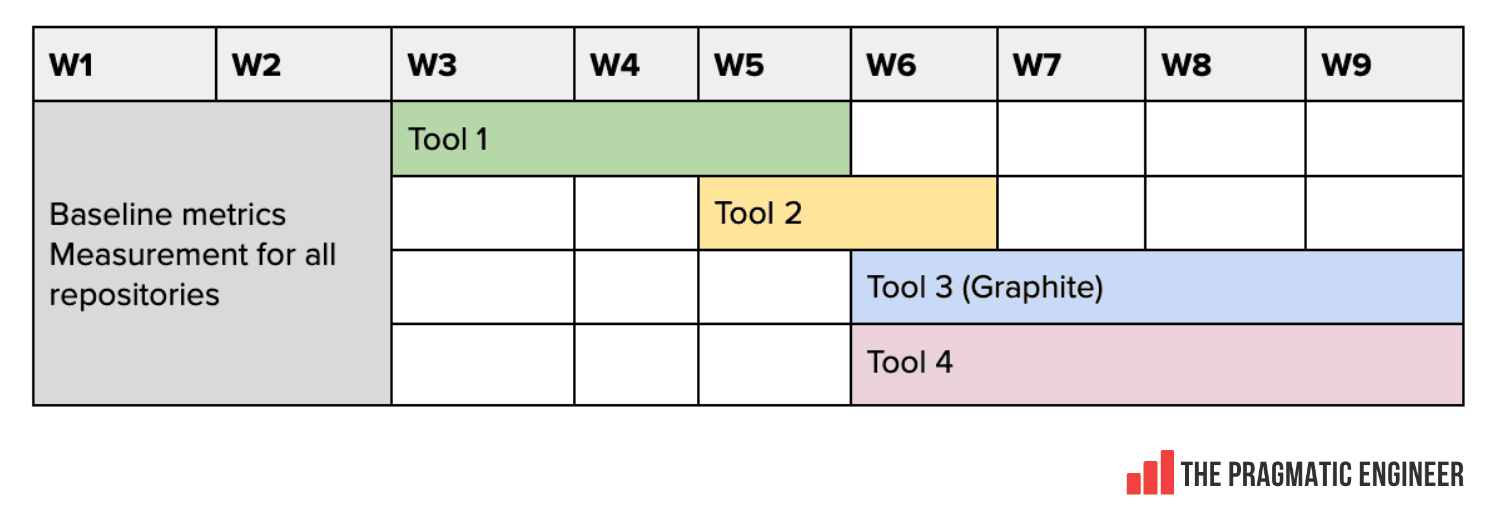

Selection methods differ sharply by company size. Teams under 60 engineers run informal two-week trials driven by developer sentiment rather than metrics. A 5-person seed-stage startup dropped Korbit after a week because engineers ignored its suggestions, then adopted CodeRabbit because developers actually acted on its suggestions. Teams above 150 engineers face security reviews, compliance gates, and executive budget cycles that slow everything down. Wealthsimple ran a 2-month formal selection process before rolling out Claude Code, with the CTO's conviction validated by Jellyfish usage data. WeTravel built a structured scoring rubric: five engineers rated roughly 100 AI code review comments across five dimensions on a scale from -3 to +3, and found no tool good enough for their codebase. A large unnamed fintech ran Copilot, Claude, and Cursor simultaneously across 50 pull requests and scored 450 comments: Cursor ranked most precise, Claude most balanced, Copilot most quality-focused.
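For teams that want to try a rubric like the one described, here is a minimal sketch of how such ratings could be aggregated. It is an illustration under stated assumptions, not WeTravel's actual system: the summary says only that there were five dimensions scored from -3 to +3, so the dimension names, the `Rating` type, and the `summarize` function below are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical dimension names: the source states only that there were five.
DIMENSIONS = ("correctness", "relevance", "actionability", "clarity", "signal_to_noise")
MIN_SCORE, MAX_SCORE = -3, 3  # scale described in the source


@dataclass(frozen=True)
class Rating:
    """One reviewer's scores for one AI-generated review comment."""
    reviewer: str
    comment_id: str
    scores: dict[str, int]  # dimension name -> integer in [-3, 3]

    def __post_init__(self) -> None:
        # Require exactly the rubric's dimensions, each within the scale.
        if set(self.scores) != set(DIMENSIONS):
            raise ValueError(f"expected scores for exactly {DIMENSIONS}")
        for dim, value in self.scores.items():
            if not MIN_SCORE <= value <= MAX_SCORE:
                raise ValueError(f"{dim}={value} is outside [{MIN_SCORE}, {MAX_SCORE}]")


def summarize(ratings: list[Rating]) -> dict[str, float]:
    """Return the mean score per dimension plus an unweighted overall mean."""
    if not ratings:
        raise ValueError("no ratings to summarize")
    summary = {dim: mean(r.scores[dim] for r in ratings) for dim in DIMENSIONS}
    summary["overall"] = mean(summary[dim] for dim in DIMENSIONS)
    return summary


if __name__ == "__main__":
    # Illustrative inputs only; these are not data from the article.
    ratings = [
        Rating("reviewer_a", "comment_1", {d: 2 for d in DIMENSIONS}),
        Rating("reviewer_b", "comment_1", {d: -1 for d in DIMENSIONS}),
    ]
    for name, value in summarize(ratings).items():
        print(f"{name}: {value}")
```

A head-to-head comparison like the fintech's could plausibly reuse the same shape by adding a tool name to each rating and calling `summarize` once per tool. Whether to weight dimensions equally, as this sketch does, is a choice each team would need to make for itself.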

The measurement frameworks are where this piece earns a full read. Every team acknowledges that lines-of-code-generated metrics are distrusted by engineers and meaningless as proxies for productivity, but nobody has found a replacement that works at scale. The WeTravel scoring system and the fintech's head-to-head PR evaluation are the most rigorous methodologies documented publicly. If your team is currently choosing between tools without a structured evaluation framework, this article gives you two working templates to steal.
